$ canonry --help

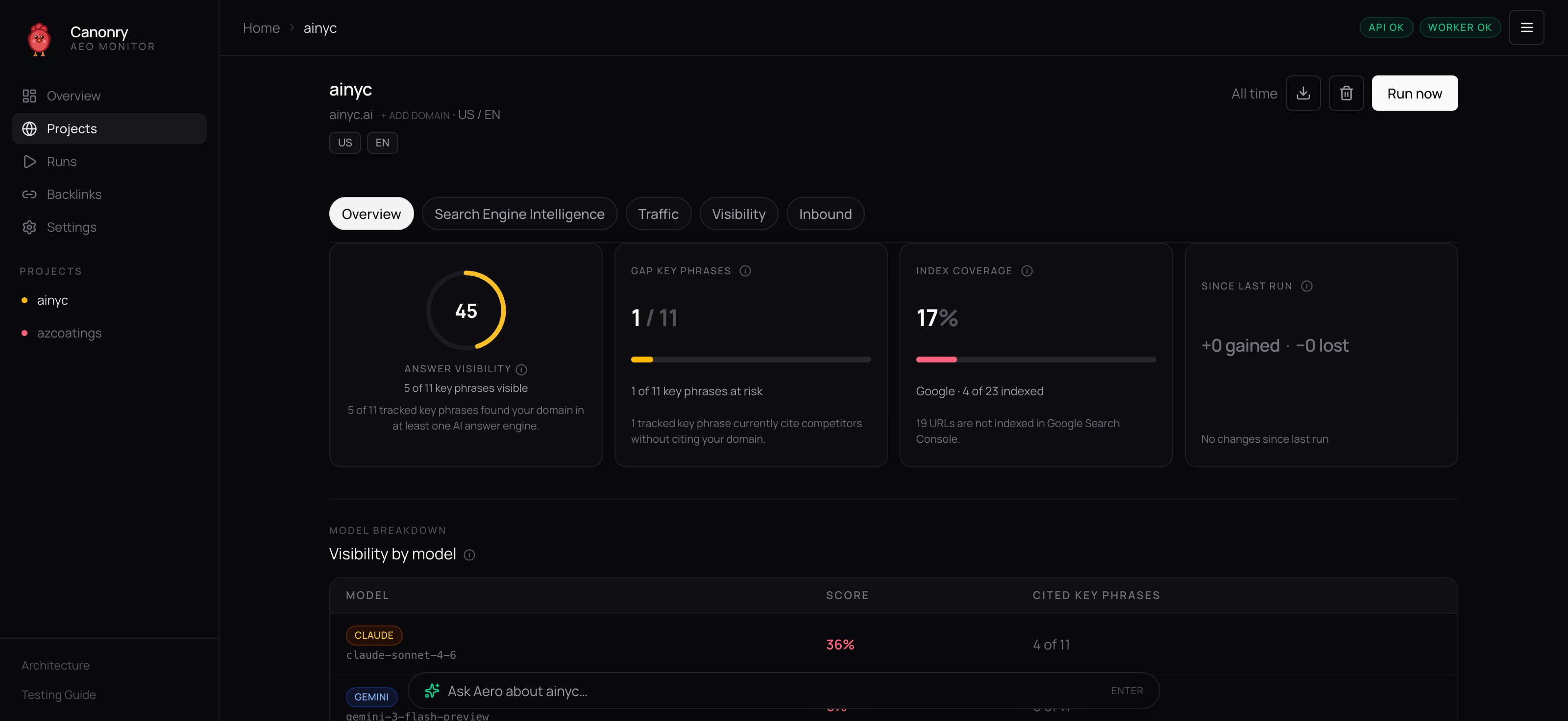

The operating system for AEO (answer engine optimization).

Canonry's agent tracks how ChatGPT, Gemini, Claude, and Perplexity cite your pages, finds the gaps, and ships the fixes. It all runs locally, and it's all open source.

$ brew install ainyc/canonry/canonry

Then run `canonry init && canonry serve`. Need another option? See all install options.